Crowd Datasets

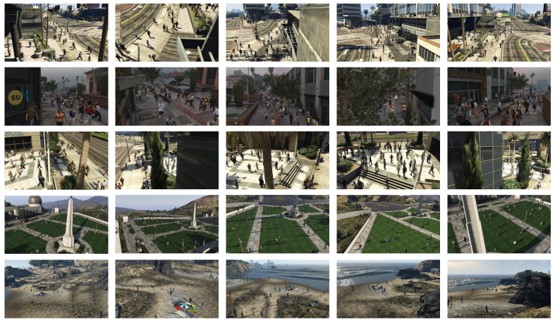

CVCS: Cross-View Cross-Scene Multi-View Crowd Counting Dataset

Synthetic dataset for cross-view cross-scene multi-view counting. The dataset contains 31 scenes, each with about 100 camera views. For each scene, we capture 100 multi-view images of crowds.

- Files: Google Drive

- Project page

- If you use this dataset please cite:

Cross-View Cross-Scene Multi-View Crowd Counting.

In: IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 557-567, Jun 2021.

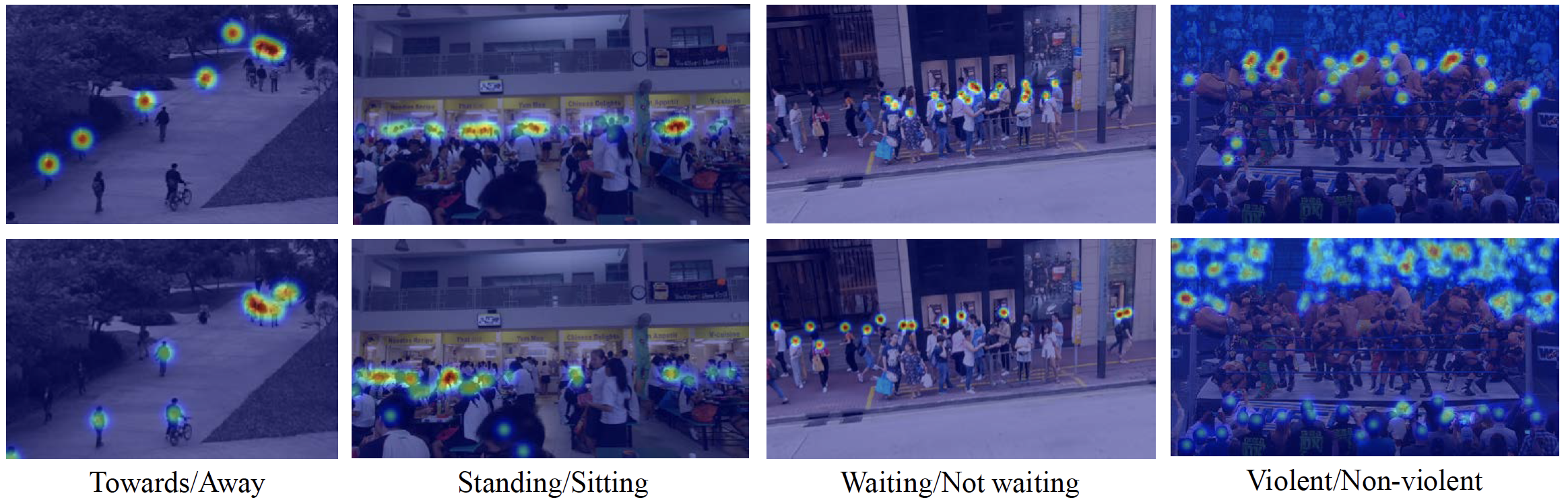

Fine-Grained Crowd Counting Dataset

Dataset for fine-grained crowd counting, which differentiates a crowd into categories based on the low-level behavior attributes of the individuals (e.g. standing/sitting or violent behavior) and then counts the number of people in each category.

- Files: dataset (1.2GB), code

- Project Page

- If you use this dataset please cite:

CityStreet: Multi-view crowd counting dataset

Datasets for multi-view crowd counting in wide-area scenes. Includes our CityStreet dataset, as well as the counting and metadata for multi-view counting on PETS2009 and DukeMTMC.

- Files: download page

- Project page

- If you use this dataset please cite:

Wide-Area Crowd Counting via Ground-Plane Density Maps and Multi-View Fusion CNNs.

In: IEEE/CVF Conf. on Computer Vision and Pattern Recognition (CVPR), Long Beach, June 2019.

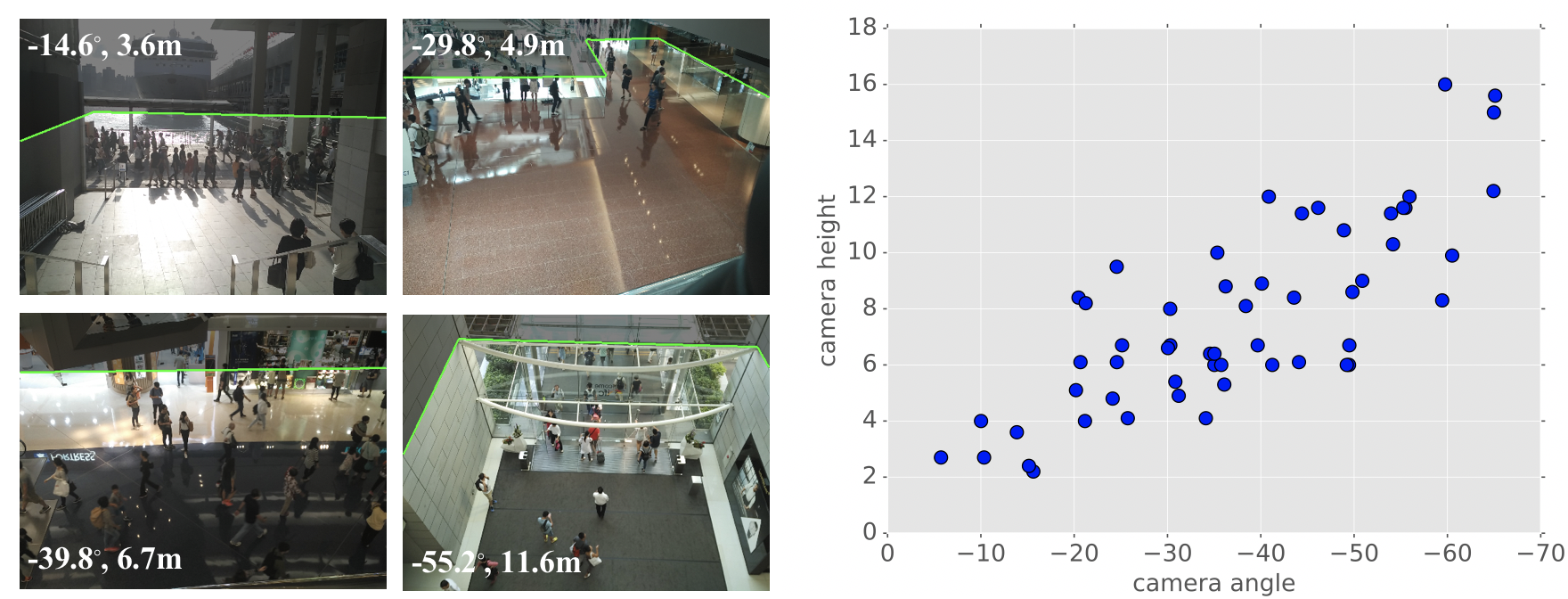

CityUHK-X: crowd dataset with extrinsic camera parameters

Crowd counting dataset of indoor/outdoor scenes with extrinsic camera parameters (camera angle and height), for use as side information.

- Files: zip (1.8GB) | readme

- Project page

- If you use this dataset please cite:

Incorporating Side Information by Adaptive Convolution.

In: Neural Information Processing Systems, Long Beach, Dec 2017.

UCSD Pedestrian Dataset

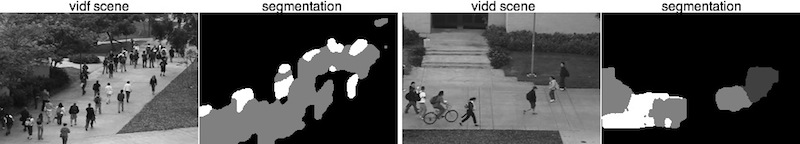

Video of people on pedestrian walkways at UCSD, and the corresponding motion segmentations. Currently two scenes are available.

- Files: vidf (787MB) | vidd (672MB) | readme

- If you use this dataset please cite:

Modeling, clustering, and segmenting video with mixtures of dynamic textures.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 30(5):909-926, May 2008.

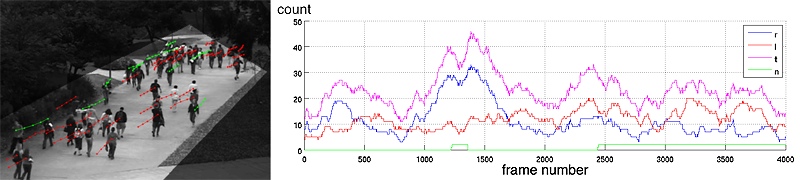

People Annotations for UCSD Dataset

People annotations, perspective density maps, region-of-interest, and crowd counts for the UCSD Pedestrian Dataset.

- Files: zip (5.3MB) | readme

- If you use this dataset please cite:

Counting People with Low-Level Features and Bayesian Regression.

IEEE Trans. on Image Processing (TIP), 21(4):2170-2177, May 2012.

People Counting Data for UCSD Dataset

The features and counts for people counting on the UCSD Dataset. This data should be sufficient if you are interested in the regression problem only. Includes the Peds1, Peds2, and CVPR counting datasets.

- Files: zip (7MB) | readme

- If you use this dataset please cite:

Counting People with Low-Level Features and Bayesian Regression.

IEEE Trans. on Image Processing (TIP), 21(4):2170-2177, May 2012.

People Counting Data for PETS2009 Dataset

The features and counts for people counting on the PETS2009 Dataset. Also includes the segmentations, perspective maps, and ground-truth annotations.

- Files: zip (4.2MB) | readme

- If you use this dataset please cite:

Analysis of Crowded Scenes using Holistic Properties.

In: 11th IEEE Intl. Workshop on Performance Evaluation of Tracking and Surveillance (PETS 2009), Miami, Jun 2009.

Line Counting Dataset

These are the ground-truth annotations for line counting on the UCSD, Grand Central, and LHI datasets.

- Files: zip (7 MB)

- If you use this dataset please cite:

Counting People Crossing a Line using Integer Programming and Local Features.

IEEE Trans. on Circuits and Systems for Video Technology (TCSVT), 26(10):1955-1969, Oct 2016.

Crossing the Line: Crowd Counting by Integer Programming with Local Features.

In: IEEE Conf. Computer Vision and Pattern Recognition (CVPR), Portland, Jun 2013.

Human Pose Datasets

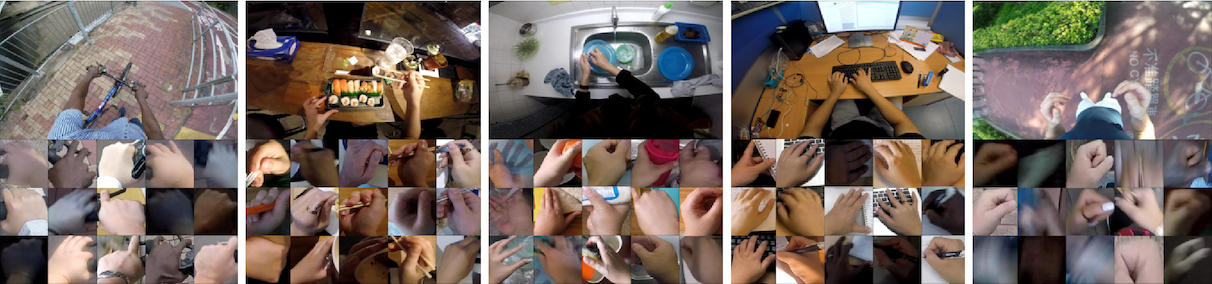

EgoDaily – Egocentric dataset for Hand Disambiguation

Egocentric hand detection dataset with variability on people, activities and places, to simulate daily life situations.

- Files: download page

- If you use this dataset please cite:

Is that my hand? An egocentric dataset for hand disambiguation.

Image and Vision Computing, 89:131-143, Sept 2019.

MADS: Martial Arts, Dancing, and Sports Dataset

A multi-view and stereo-depth dataset for 3D human pose estimation, which consists of challenging martial arts actions (Tai-chi and Karate), dancing actions (hip-hop and jazz), and sports actions (basketball, volleyball, football, rugby, tennis and badminton).

- Files: download here

- Project page

- If you use this dataset please cite:

Martial Arts, Dancing and Sports Dataset: a Challenging Stereo and Multi-View Dataset for 3D Human Pose Estimation.

Image and Vision Computing, 61:22-39, May 2017.

Video Datasets

Experimental setup for semantic video texture annotation on the DynTex dataset

Videos can be obtained from the DynTex website. The text files contain the list of selected tags, the list of selected videos and ground-truth tags, and the training/test set splits.

- Files: ground-truth | setup

- If you use this dataset please cite:

Clustering Dynamic Textures with the Hierarchical EM Algorithm for Modeling Video.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 35(7):1606-1621, Jul 2013.

Boats Videos

A video of boats moving through water. A challenging background subtraction task, where the background itself is moving.

- Files: zip (135MB) | readme

- If you use this dataset please cite:

Generalized Stauffer-Grimson background subtraction for dynamic scenes.

Machine Vision and Applications, 22(5):751-766, Sep 2011.

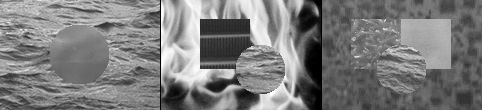

Synthetic Video Texture Dataset

A dataset of composite video textures. The videos were created by compositing different video textures together into a template with 2, 3, or 4 segments.

- Files: zip (212MB)

- If you use this dataset please cite:

Modeling, clustering, and segmenting video with mixtures of dynamic textures.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 30(5):909-926, May 2008.

Highway Traffic Dataset (Clustering)

A dataset of highway traffic videos used for clustering video textures.

- Files: zip (42MB)

- If you use this dataset please cite:

Modeling, clustering, and segmenting video with mixtures of dynamic textures.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 30(5):909-926, May 2008.

Highway Traffic Videos (Classification)

A set of highway traffic videos. Each video is classified as low, medium, or high traffic.

- Files: day videos tgz (60MB), night videos tgz (182MB)

- If you use this dataset please cite:

Probabilistic Kernels for the Classification of Auto-regressive Visual Processes.

In: IEEE Conference on Computer Vision and Pattern Recognition (CVPR), San Diego, Jun 2005.

Other Datasets

Dolphin-14k: Chinese White Dolphin detection dataset

A dataset of Chinese White Dolphins (CWD) and distractors for detection tasks.

- Files: Google Drive, Readme

- Project page

- If you use this dataset please cite:

Chinese White Dolphin Detection in the Wild.

In: ACM Multimedia Asia (MMAsia), Gold Coast, Australia, Dec 2021.

Small Object Dataset

Images of small objects for small instance detection. Currently four object types are available.

- Files: zip (5.9 MB)

- If you use this dataset please cite:

Small Instance Detection by Integer Programming on Object Density Maps.

In: IEEE Conf. Computer Vision and Pattern Recognition (CVPR), Boston, Jun 2015.

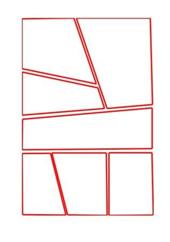

Manga Layout Dataset

Dataset of manga panel layouts.

- Files: zip

- If you use this dataset please cite:

Automatic Stylistic Manga Layout.

ACM Transactions on Graphics (Proc. SIGGRAPH Asia 2012), Singapore, Nov 2012.

Key annotations for the GTZAN music genre dataset

Ground-truth annotations of the musical keys of songs in the GTZAN music genre dataset.

- Files: zip

- If you use this dataset please cite:

Genre Classification and the Invariance of MFCC Features to Key and Tempo.

In: Intl. Conference on MultiMedia Modeling (MMM), Taipei, Jan 2011.

Code

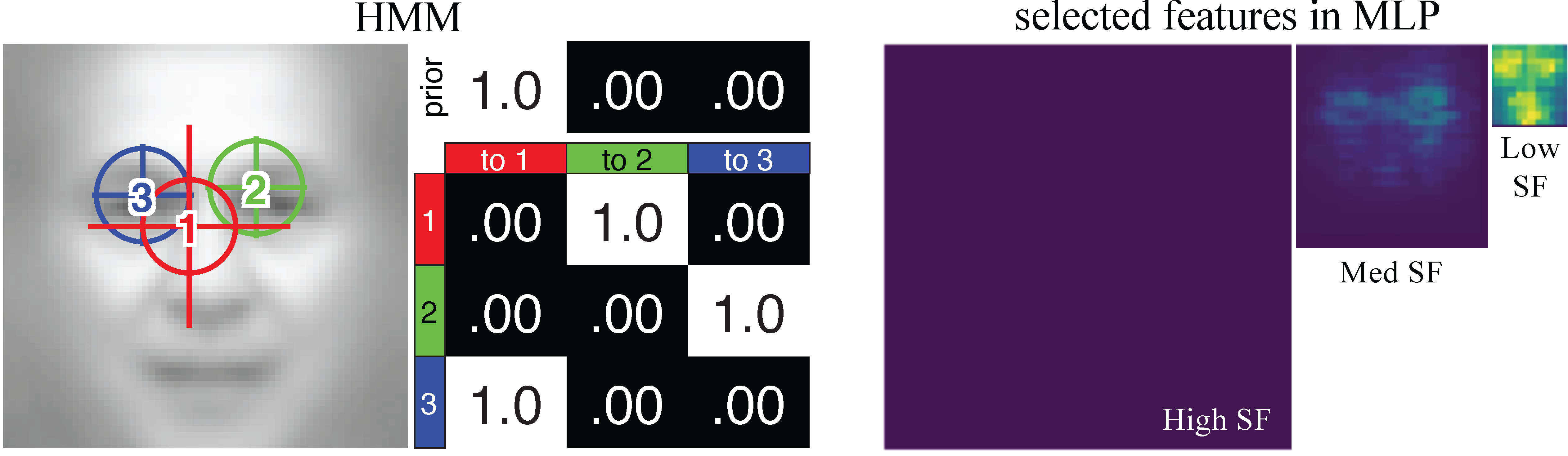

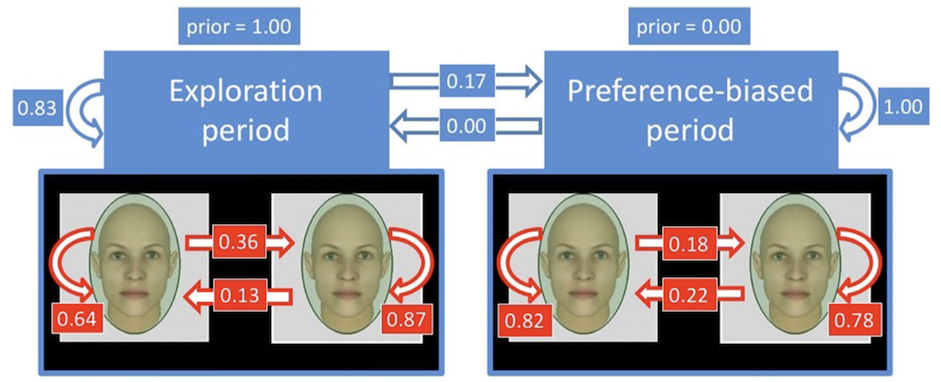

Modeling Eye Movements with Deep Neural Networks and Hidden Markov Models (DNN+HMM)

This is the toolbox for modeling eye movements and feature learning with deep neural networks and hidden Markov models (DNN+HMM).

- Files: download here

- Project page

- If you use this toolbox please cite:

Understanding the role of eye movement consistency in face recognition and autism through integrating deep neural networks and hidden Markov models.

npj Science of Learning, 7:28, Oct 2022.

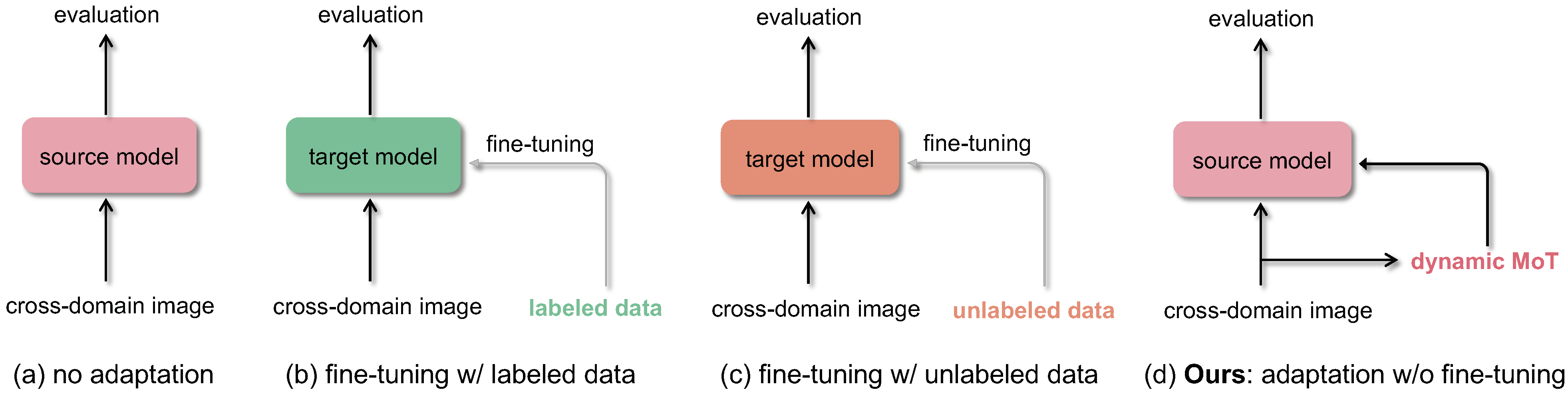

Crowd counting: Zero-shot cross-domain counting

Dynamic momentum adaptation for zero-shot cross-domain crowd counting.

- Files: github

- Project page

- If you use this toolbox please cite:

Dynamic Momentum Adaptation for Zero-Shot Cross-Domain Crowd Counting.

In: ACM Multimedia (MM), Oct 2021.

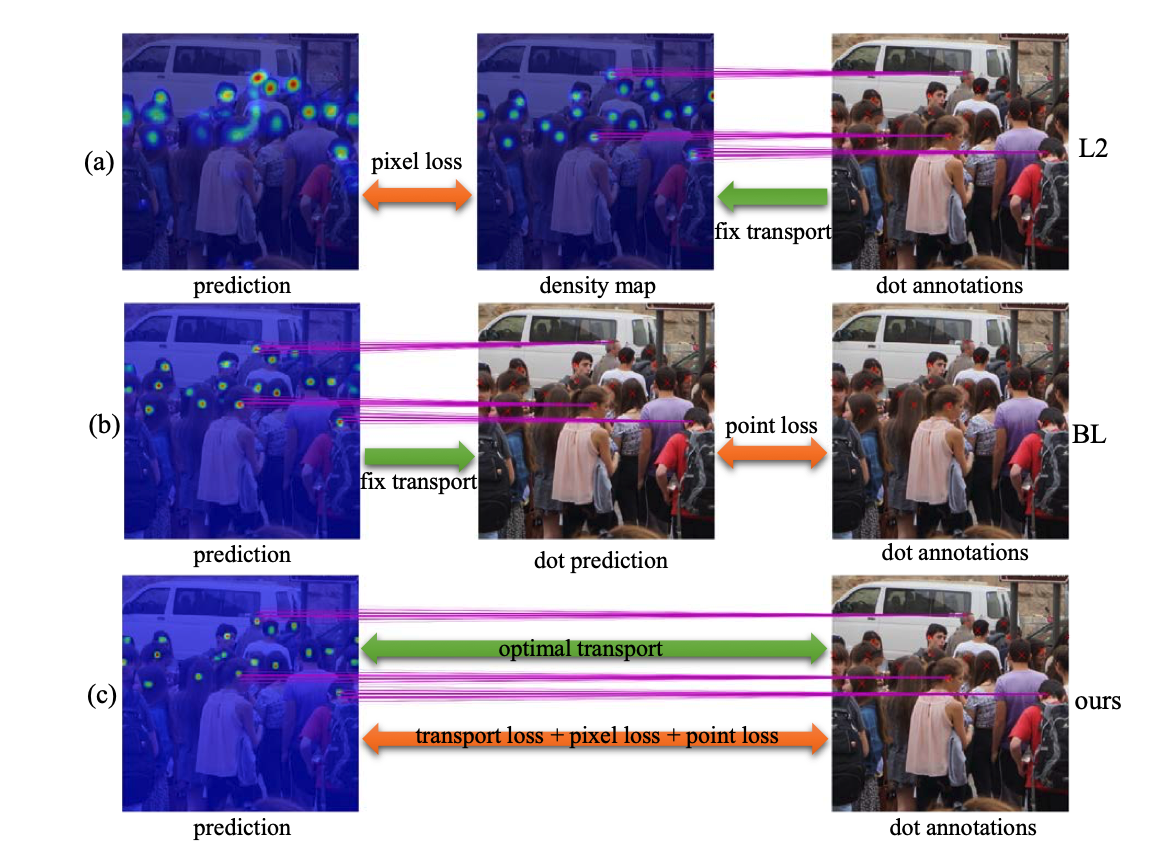

Crowd counting: Generalized loss function

Generalized loss function for crowd counting.

- Files: github

- Project page

- If you use this toolbox please cite:

A Generalized Loss Function for Crowd Counting and Localization.

In: IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Jun 2021.

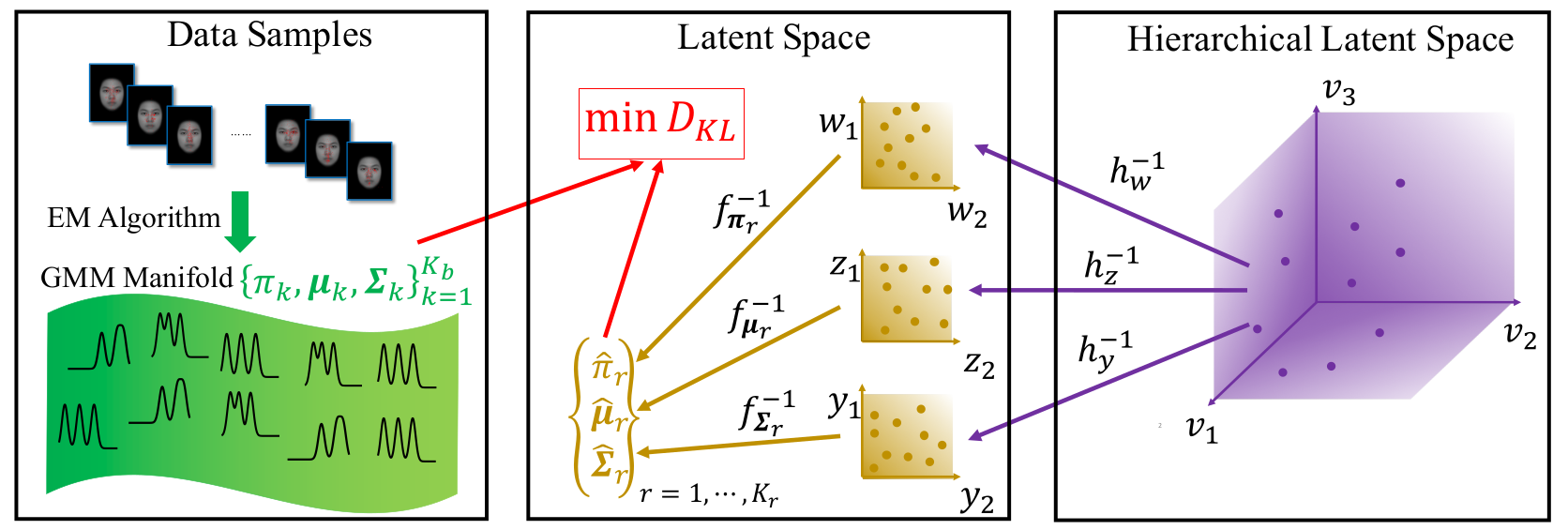

Parametric Manifold Learning of Gaussian Mixture Models (PRIMAL-GMM) Toolbox

This is a Python toolbox for learning parametric manifolds of Gaussian mixture models (GMMs).

- Files: download here

- Project page

- If you use this toolbox please cite:

PRIMAL-GMM: PaRametrIc MAnifold Learning of Gaussian Mixture Models.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 44(6):3197-3211, June 2022 (online 2021).

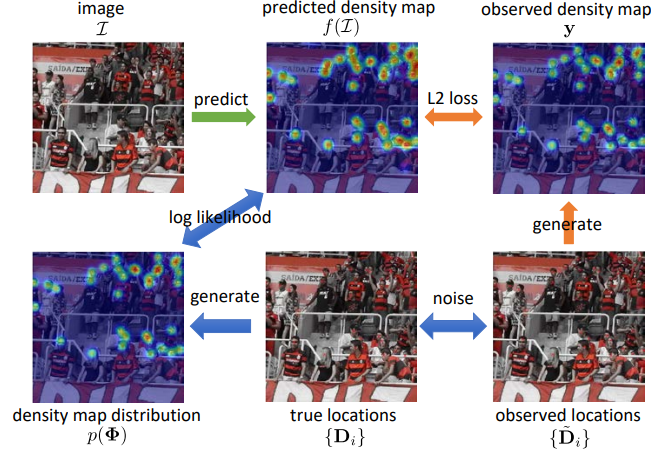

Crowd counting: Modeling noisy annotations

Modeling noisy annotations in crowd counting: NoisyCC.

- Files: github

- Project page

- If you use this toolbox please cite:

Modeling Noisy Annotations for Crowd Counting.

In: Neural Information Processing Systems (NeurIPS), Dec 2020.

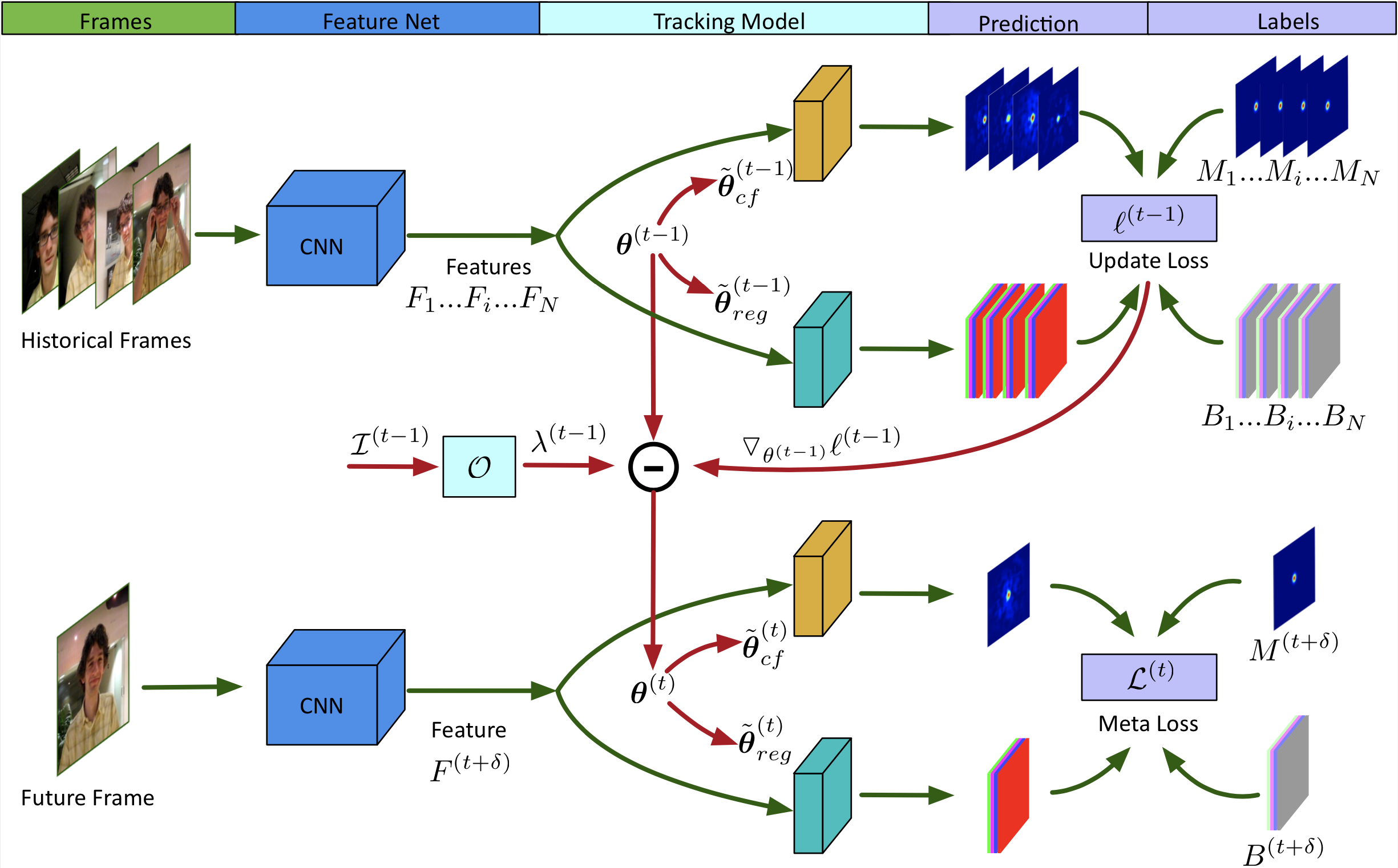

Visual Object Tracking: ROAM and ROAM++

Recurrently optimized tracking with ROAM and ROAM++.

- Files: github

- Project page

- If you use this toolbox please cite:

ROAM: Recurrently Optimizing Tracking Model.

In: IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, Jun 2020.

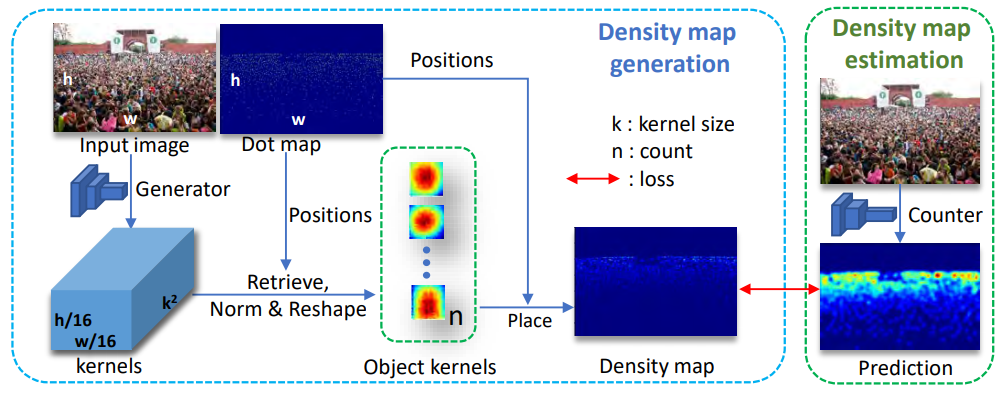

Crowd counting: Kernel-based density map generation

Kernel-based density map generation (KDMG) for crowd counting.

- Files: github

- Project page

- If you use this toolbox please cite:

Kernel-based Density Map Generation for Dense Object Counting.

IEEE Trans. Pattern Analysis and Machine Intelligence (TPAMI), 44(3):1357-1370, Mar 2022.

Eye Movement analysis with Switching HMMs (EMSHMM) Toolbox

This is a MATLAB toolbox for analyzing eye movement data using switching hidden Markov models (SHMMs), intended for cognitive tasks that involve changes in cognitive state. It includes code for learning SHMMs for individuals, as well as analyzing the results.

- Files: download here

- Project page

- If you use this toolbox please cite:

Eye movement analysis with switching hidden Markov models.

Behavior Research Methods, 52:1026-1043, June 2020.

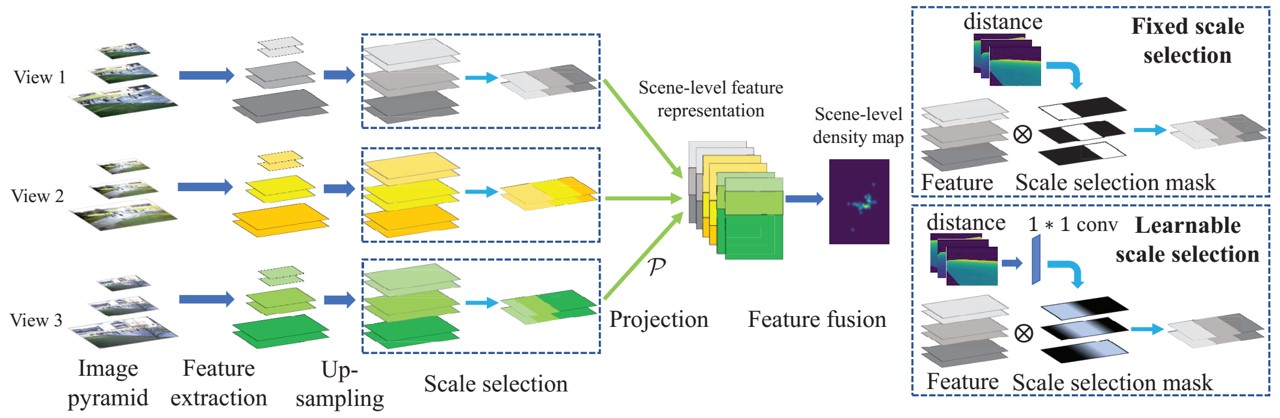

Crowd counting: Multi-view Multi-scale (MVMS) counting

The code/model for wide-area crowd counting using multiple views: multi-view multi-scale (MVMS) model.

- Files: github

- Project page

- If you use this toolbox please cite:

Wide-Area Crowd Counting via Ground-Plane Density Maps and Multi-View Fusion CNNs.

In: IEEE/CVF Conf. on Computer Vision and Pattern Recognition (CVPR), Long Beach, June 2019.

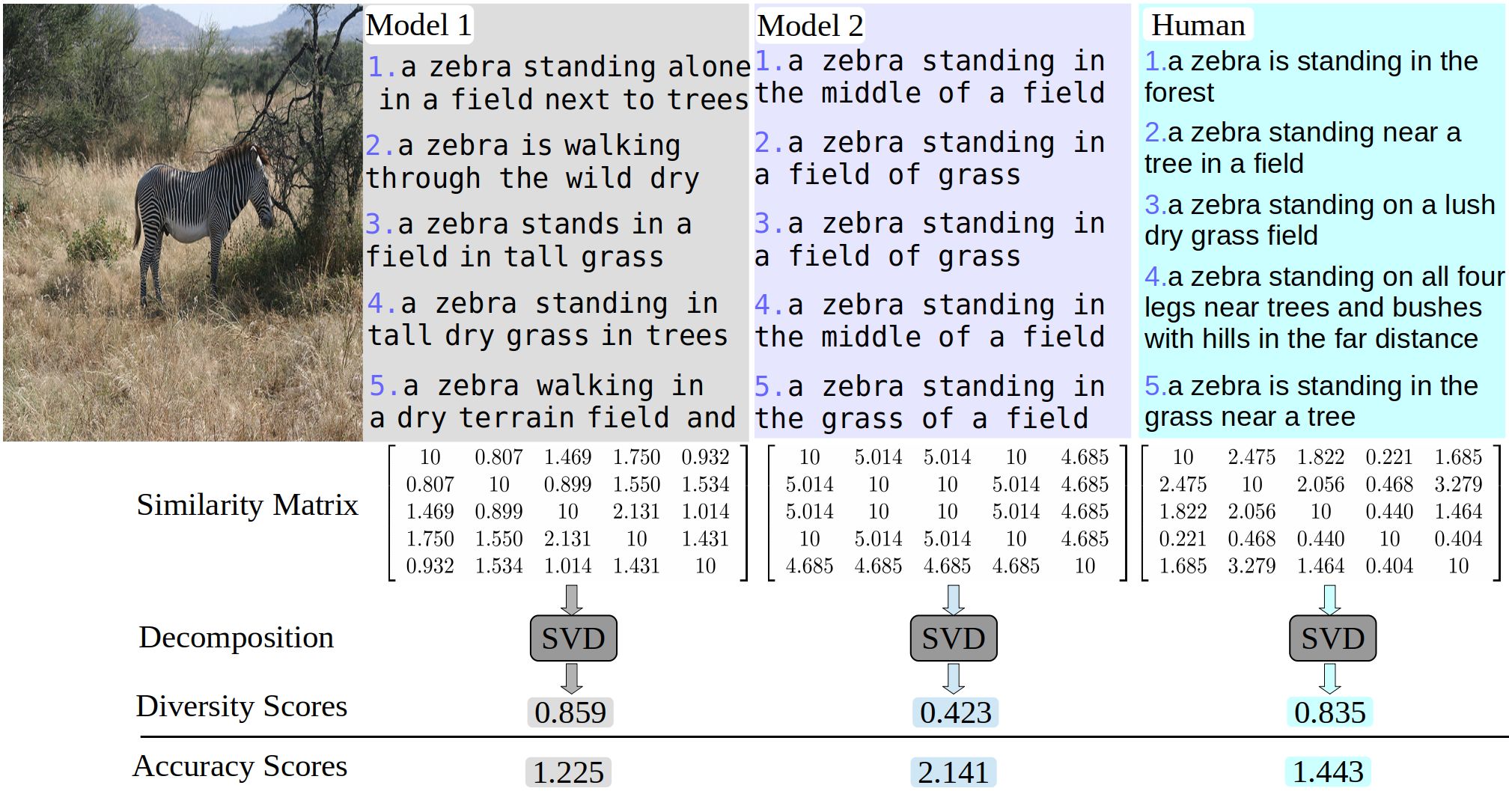

Image Captioning: Diversity Metrics

Toolbox for computing diversity metrics for image captioning.

- Files: github

- Project page

- If you use this toolbox please cite:

On Diversity in Image Captioning: Metrics and Methods.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 44(2):1035-1049, Feb 2022.

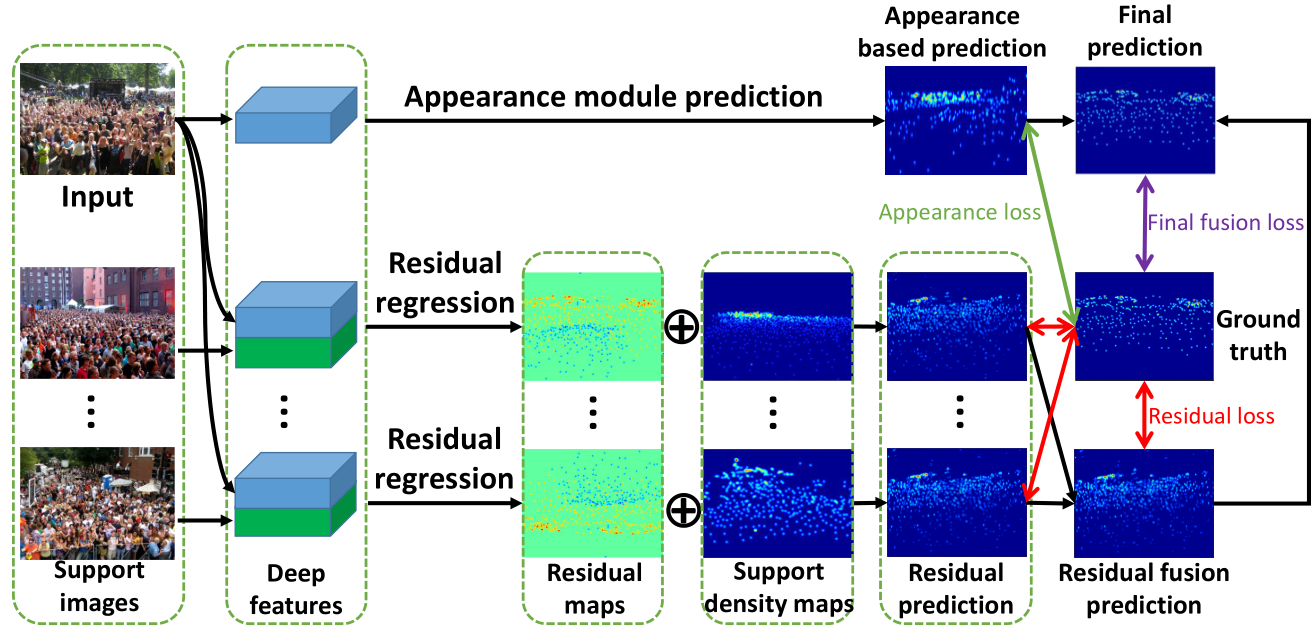

Crowd counting: residual regression with semantic prior

The code/model for crowd counting using residual regression and semantic prior.

- Files: github

- Project page

- If you use this toolbox please cite:

Residual Regression with Semantic Prior for Crowd Counting.

In: IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, June 2019.

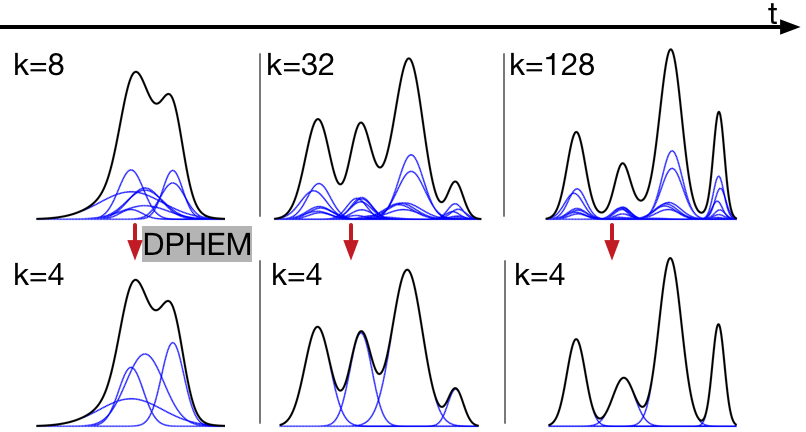

DPHEM toolbox for simplifying GMMs

Toolboxes for the density-preserving hierarchical EM (DPHEM) algorithm for simplifying mixture models.

- Files: Python toolbox (compatible with sklearn.mixture.GaussianMixture) and Matlab toolbox

- If you use this code please cite:

Density-Preserving Hierarchical EM Algorithm: Simplifying Gaussian Mixture Models for Approximate Inference.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 41(6):1323-1337, June 2019.

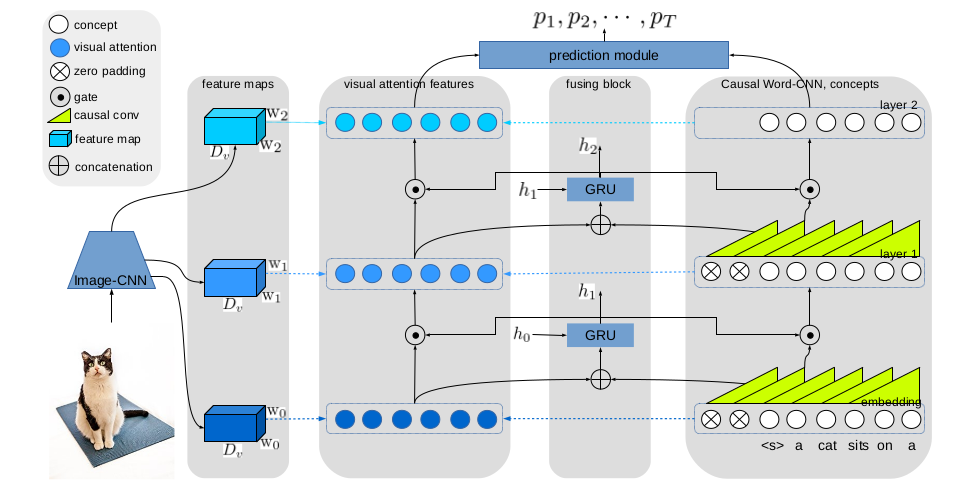

Image Captioning: Gated Hierarchical Attention

The code/model for gated hierarchical attention (GHA) for image captioning.

- Files: github

- Project page

- If you use this toolbox please cite:

Gated Hierarchical Attention for Image Captioning.

In: Asian Conference on Computer Vision (ACCV), Perth, Dec 2018.

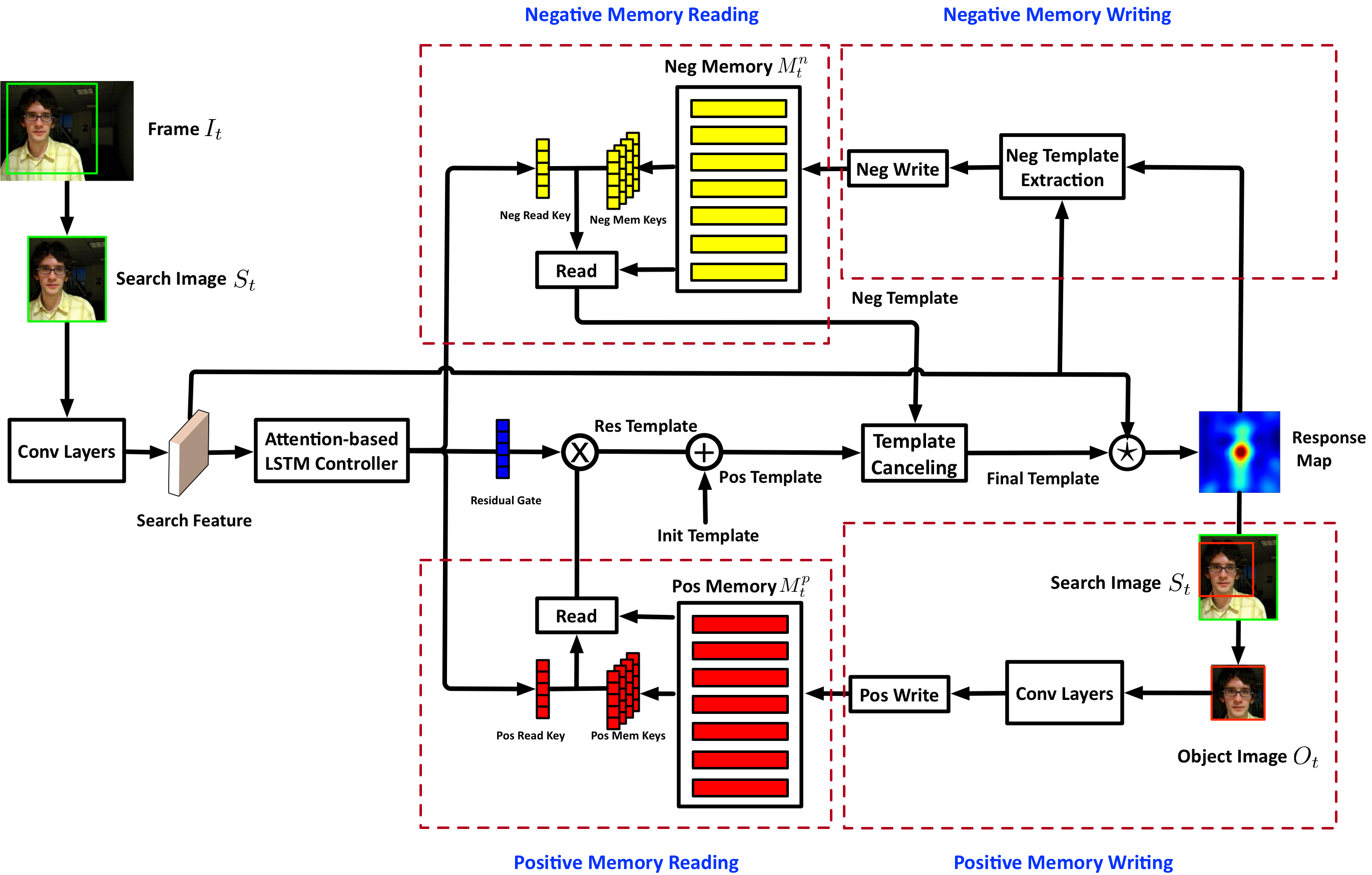

Visual Object Tracking: MemTrack and MemDTC

The code/models for MemTrack and MemDTC for visual object tracking.

- Files: MemTrack (ECCV), MemDTC (TPAMI)

- Project page

- If you use this toolbox please cite:

Visual Tracking via Dynamic Memory Networks.

IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 43(1):360-374, Jan 2021.

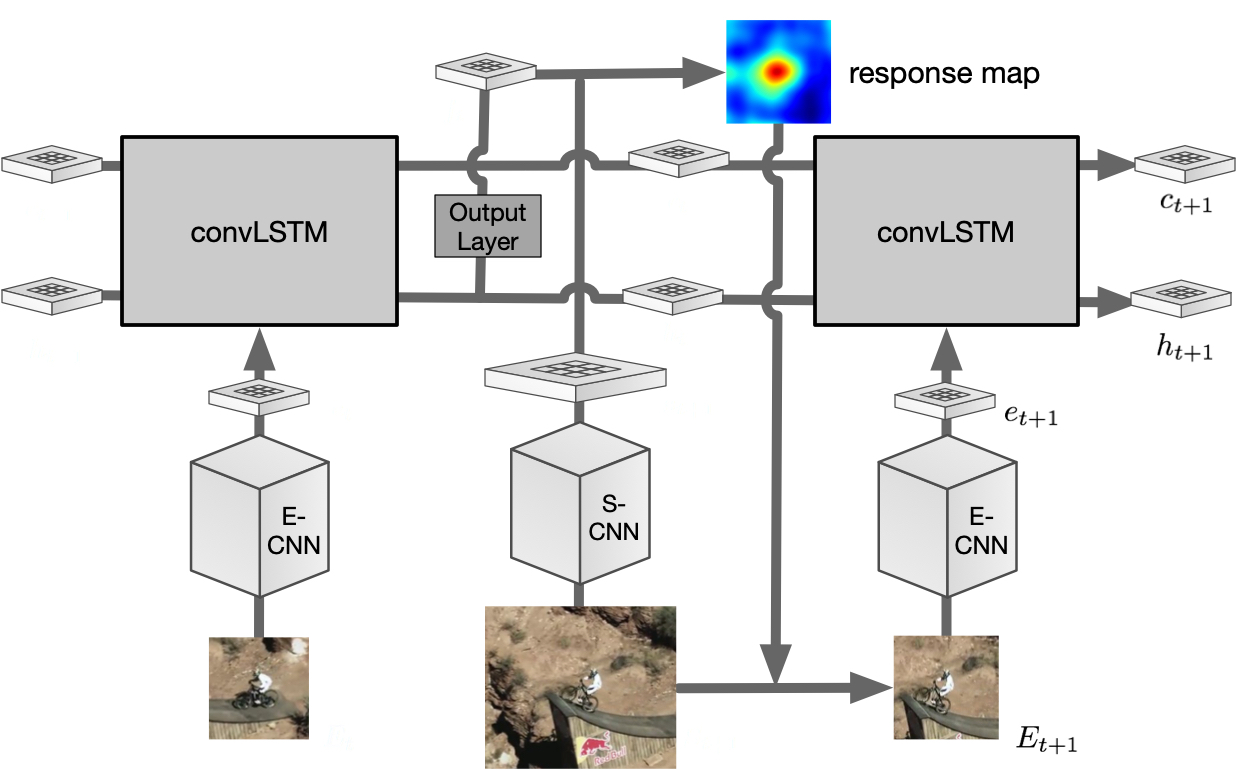

Visual Object Tracking: Recurrent filter learning

This is the code/model for Recurrent Filter Learning for VOT.

- Files: GitHub

- Project page

- If you use this toolbox please cite:

Recurrent filter learning for visual tracking.

In: ICCV 5th Visual Object Tracking Challenge Workshop VOT2017, Venice, Oct 2017.

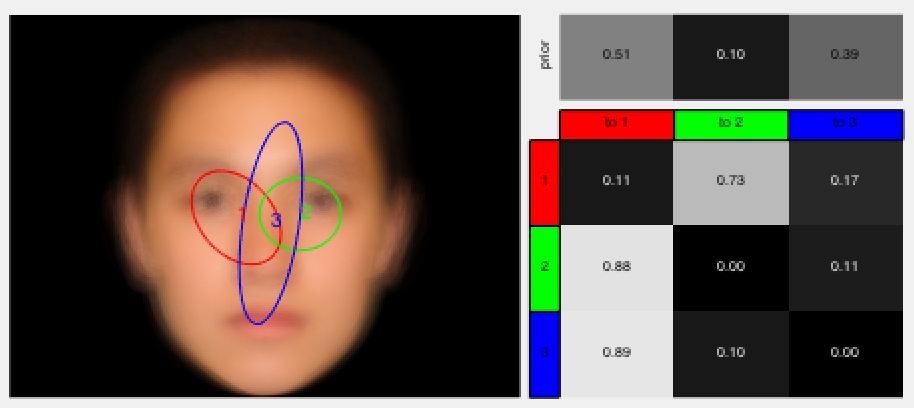

Eye Movement Hidden Markov Models (EMHMM) Toolbox

This is a MATLAB toolbox for analyzing eye movement data using hidden Markov models. It includes code for learning HMMs for individuals, as well as clustering individuals’ HMMs into groups.

- Files: download here

- Project page

- If you use this toolbox please cite:

Understanding eye movements in face recognition using hidden Markov models.

Journal of Vision, 14(11):8, Sep 2014.

Manga panel extraction toolbox

This is a MATLAB toolbox for automatically extracting the panels from digital manga/comic pages.

- Files: zip

- If you use this code please cite:

VarBB Toolbox

The VarBB toolbox is an implementation of the variational branch-and-bound algorithm for Bregman ball trees (bb-trees). VarBB can speed up nearest neighbor search for generative models.

- Files: zip

- If you use this code please cite:

That was fast! Speeding up NN search of high dimensional distributions.

In: International Conference on Machine Learning (ICML), Atlanta, Jun 2013.

H3M toolbox

This is a MATLAB toolbox for clustering hidden Markov models using the variational HEM algorithm. The toolbox can also estimate HMM mixtures (H3M) using the EM algorithm.

- Files: zip

- If you use this code please cite:

Clustering hidden Markov models with variational HEM.

Journal of Machine Learning Research (JMLR), 15(2):697-747, Feb 2014.

libdt – OpenCV library for Dynamic Textures

This is an OpenCV C++ library for Dynamic Texture (DT) models. It contains code for the EM algorithm for learning DTs and DT mixture models, and the HEM algorithm for clustering DTs, as well as DT-based applications, such as motion segmentation and Bag-of-Systems (BoS) motion descriptors.

- Files: zip (v1.01) | readme

- If you use this code please cite:

- Modeling, clustering, and segmenting video with mixtures of dynamic textures. IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 30(5):909-926, May 2008.

- Clustering Dynamic Textures with the Hierarchical EM Algorithm for Modeling Video. IEEE Trans. on Pattern Analysis and Machine Intelligence (TPAMI), 35(7):1606-1621, Jul 2013.

Generalized Gaussian Process Models Toolbox

This is a toolbox for generalized Gaussian process models (GGPM). The toolbox is implemented as an add-on to the GPML toolbox for Matlab/Octave. The toolbox contains likelihood functions for GGPMs, as well as a Taylor inference function. GPML version 3.4 is supported.

- Files: zip | readme

- If you use this code please cite:

Generalized Gaussian Process Models.

In: IEEE Conf. Computer Vision and Pattern Recognition (CVPR), Colorado Springs, Jun 2011.