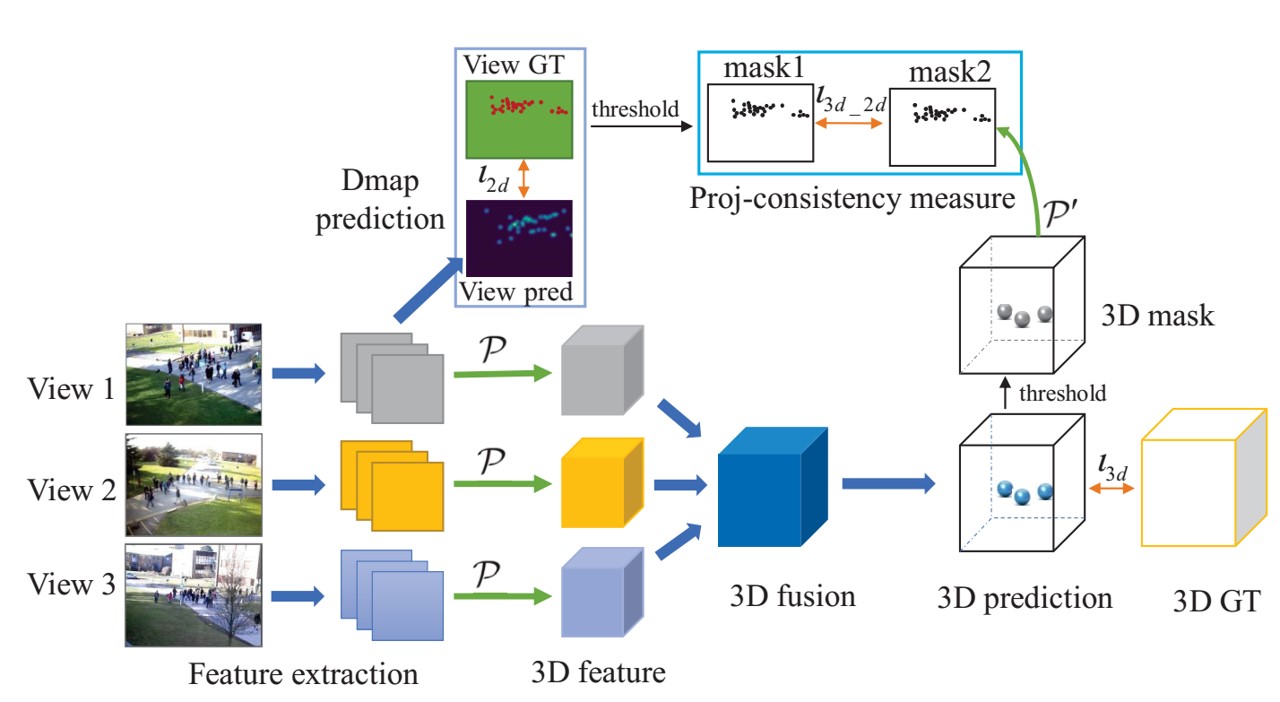

Crowd counting has been studied for decades and a lot of works have achieved good performance, especially the DNNs-based density map estimation methods. Most existing crowd counting works focus on single-view counting, while few works have studied multi-view counting for large and wide scenes, where multiple cameras are used. Recently, an end-to-end multi-view crowd counting method called multi-view multi-scale (MVMS) has been proposed, which fuses multiple camera views using a CNN to predict a 2D scene-level density map on the ground-plane. Unlike MVMS, we propose to solve the multi-view crowd counting task through 3D feature fusion with 3D scene-level density maps, instead of the 2D ground-plane ones. Compared to 2D fusion, the 3D fusion extracts more information of the people along z-dimension (height), which helps to solve the scale variations across multiple views. The 3D density maps still preserve the 2D density maps property that the sum is the count, while also providing 3D information about the crowd density. We also explore the projection consistency among the 3D prediction and the ground-truth in the 2D views to further enhance the counting performance. The proposed method is tested on 3 multi-view counting datasets and achieves better or comparable counting performance to the state-of-the-art.

Selected Publications

,

International Journal of Computer Vision (IJCV), 130:3123-3139, Dec 2022.

,

In: AAAI Conference on Artificial Intelligence, AAAI, New York, Feb 2020. [supplemental]