In this paper, we study masked autoencoder (MAE) pre-training on videos for matching-based downstream tasks, including visual object tracking (VOT) and video object segmentation (VOS). A simple extension of MAE is to randomly mask out frame patches in videos and reconstruct the frame pixels. However, we find that this simple baseline heavily relies on spatial cues while ignoring temporal relations for frame reconstruction, thus leading to sub-optimal temporal matching representations for VOT and VOS. To alleviate this problem, we propose DropMAE, which adaptively performs spatial-attention dropout in the frame reconstruction to facilitate temporal correspondence learning in videos. We show that our DropMAE is a strong and efficient temporal matching learner, which achieves better fine-tuning results on matching-based tasks than the ImageNet-based MAE with 2× faster pre-training speed. Moreover, we also find that motion diversity in pre-training videos is more important than scene diversity for improving the performance on VOT and VOS. Our pre-trained DropMAE model can be directly loaded in existing ViT-based trackers for fine-tuning without further modifications. Notably, DropMAE sets new state-of-the-art performance on 8 out of 9 highly competitive video tracking and segmentation datasets. Our code and pre-trained models are available at https://github.com/jimmy-dq/DropMAE.git.

Motivation

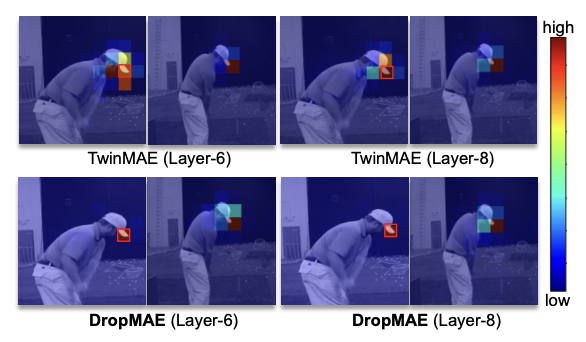

- The baseline TwinMAE reconstruction relies heavily on within-frame patches (spatial cues), which may lead to sub-optimal temporal representations for matching-based video tasks.

- Our proposed DropMAE addresses this problem by reducing co-adaptation between spatial cues during reconstruction, so that the model focuses more on temporal cues and learns better temporal correspondences for VOT and VOS.
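
To make the idea of spatial-attention dropout concrete, here is a minimal sketch in plain Python. It is a simplification and not the released implementation: within-frame attention logits are dropped uniformly at random with a fixed probability, whereas DropMAE chooses what to drop adaptively. The names `spatial_attention_dropout`, `temporal_mass`, `frame_ids`, and `drop_prob` are hypothetical and used only for illustration.

```python
import math
import random


def softmax(row):
    """Numerically stable softmax; entries set to -inf receive weight 0."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def spatial_attention_dropout(logits, frame_ids, drop_prob, rng):
    """Mask within-frame attention logits at random, then apply softmax.

    logits:    square list of lists, logits[i][j] = query i attending to key j
    frame_ids: frame_ids[i] is the frame that token i belongs to
    drop_prob: probability of dropping each within-frame (spatial) entry
    The diagonal (a token attending to itself) is never dropped in this
    sketch, so every row keeps at least one finite logit.
    """
    weights = []
    for i, row in enumerate(logits):
        masked = []
        for j, value in enumerate(row):
            same_frame = frame_ids[i] == frame_ids[j]
            if same_frame and i != j and rng.random() < drop_prob:
                masked.append(-math.inf)  # spatial cue removed
            else:
                masked.append(value)
        weights.append(softmax(masked))
    return weights


def temporal_mass(weights, frame_ids):
    """Average attention weight that queries place on keys from other frames."""
    total = 0.0
    for i, row in enumerate(weights):
        total += sum(w for j, w in enumerate(row) if frame_ids[j] != frame_ids[i])
    return total / len(weights)


# Toy setup (assumed): two frames with four patch tokens each, uniform logits.
frame_ids = [0, 0, 0, 0, 1, 1, 1, 1]
logits = [[0.0] * 8 for _ in range(8)]
rng = random.Random(0)

no_drop = spatial_attention_dropout(logits, frame_ids, 0.0, rng)
full_drop = spatial_attention_dropout(logits, frame_ids, 1.0, rng)
print(temporal_mass(no_drop, frame_ids), temporal_mass(full_drop, frame_ids))
```

With uniform logits, half of each query's attention falls on the other frame when nothing is dropped. Once every within-frame entry except the diagonal is dropped, each query can attend only to itself and the four tokens of the other frame, so the cross-frame share rises to four fifths. This is the shift toward temporal cues described above, shown in its most extreme form.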

Videos-VOT

Videos-VOS

Poster

Presentation

Selected Publications

- DropMAE: Masked Autoencoders with Spatial-Attention Dropout for Tracking Tasks. In: IEEE/CVF Conf. on Computer Vision and Pattern Recognition (CVPR), Jun 2023. [code]

Results/Code