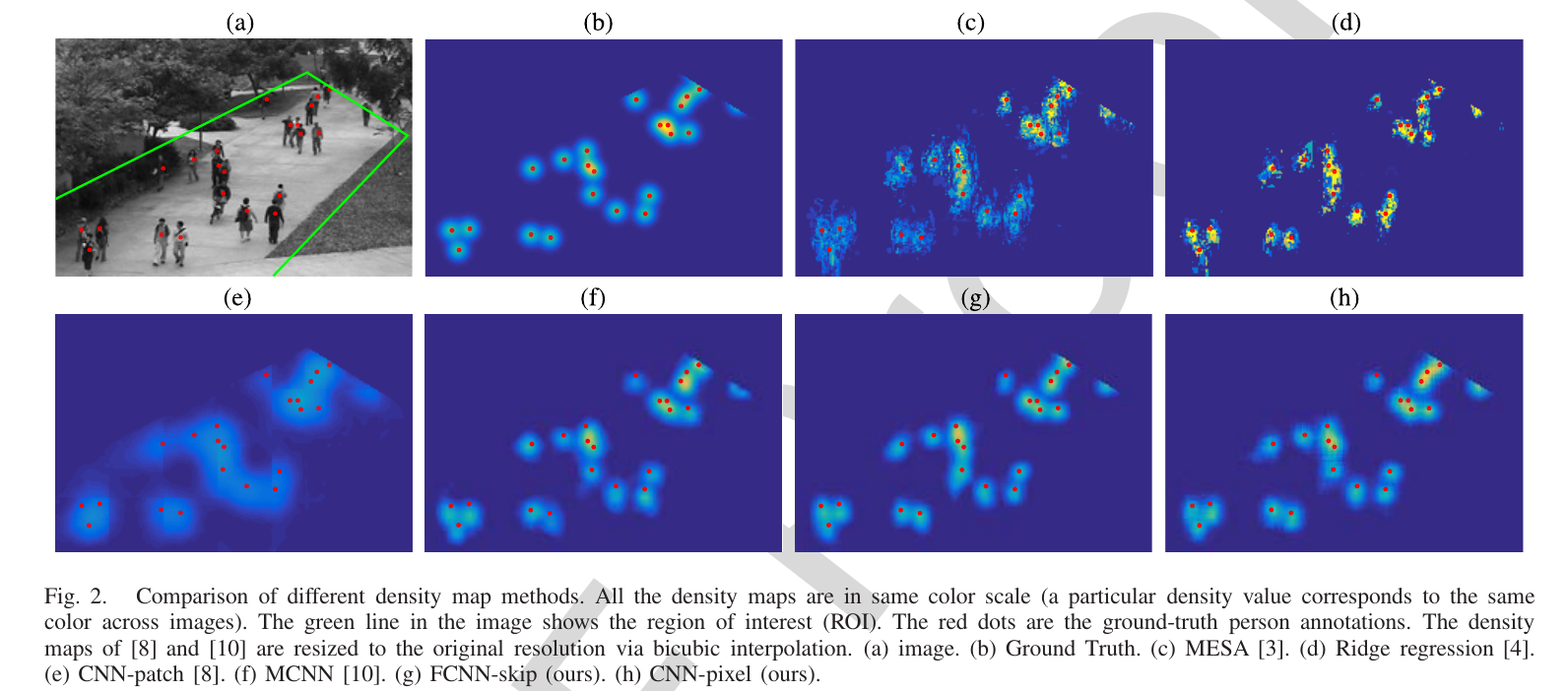

For crowded scenes, the accuracy of object-based computer vision methods declines when the images are low-resolution and objects have severe occlusions. Taking counting methods for example, almost all the recent state-of-the-art counting methods bypass explicit detection and adopt regression-based methods to directly count the objects of interest. Among regression-based methods, density map estimation, where the number of objects inside a subregion is the integral of the density map over that subregion, is especially promising because it preserves spatial information, which makes it useful for both counting and localization (detection and tracking). With the power of deep convolutional neural networks (CNNs) the counting performance has improved steadily. The goal of this paper is to evaluate density maps generated by density estimation methods on a variety of crowd analysis tasks, including counting, detection, and tracking. Most existing CNN methods produce density maps with resolution that is smaller than the original images, due to the downsample strides in the convolution/pooling operations. To produce an original-resolution density map, we also evaluate a classical CNN that uses a sliding window regressor to predict the density for every pixel in the image. We also consider a fully convolutional (FCNN) adaptation, with skip connections from lower convolutional layers to compensate for loss in spatial information during upsampling. In our experiments, we found that the lower-resolution density maps sometimes have better counting performance. In contrast, the original-resolution density maps improved localization tasks, such as detection and tracking, compared to bilinear upsampling the lower-resolution density maps. Finally, we also propose several metrics for measuring the quality of a density map, and relate them to experiment results on counting and localization.

Selected Publications

- Beyond Counting: Comparisons of Density Maps for Crowd Analysis Tasks - Counting, Detection, and Tracking.

,

IEEE Trans. on Circuits and Systems for Video Technology (TCSVT), 29(5):1408-1422, May 2019.

Demos/Results